Reasoning models are one of the most important developments in AI because they change what the model is doing underneath the interface.

Traditional large language models are very good at predicting the most likely next word (strictly, the next token). That can produce impressive results, but it also has obvious limits: the model may sound fluent without having worked through the problem in a structured way.

Reasoning models take a different approach. Rather than jumping straight to an answer, they work through the problem in stages. They break down tasks, decide what information they need, use tools where appropriate, and refine their answer as they go.

That makes them much more useful for work that involves analysis, planning, decision-making, and multi-step problem solving.

How reasoning models differ from standard LLMs

A standard LLM is often best understood as a powerful pattern-matcher. It predicts plausible continuations based on what it has seen before.

A reasoning model still relies on the same broad machine learning foundation, but it is optimised to do more deliberate work before answering. In practice, that can involve:

- decomposing a problem into sub-steps

- checking intermediate reasoning

- choosing when to use tools such as browsing, code execution, or image analysis

- incorporating fresh evidence during the task

- revising the answer when the first pass is not good enough

This gives the model a more methodical working style. Instead of producing the first plausible response, it is more likely to build toward an answer.

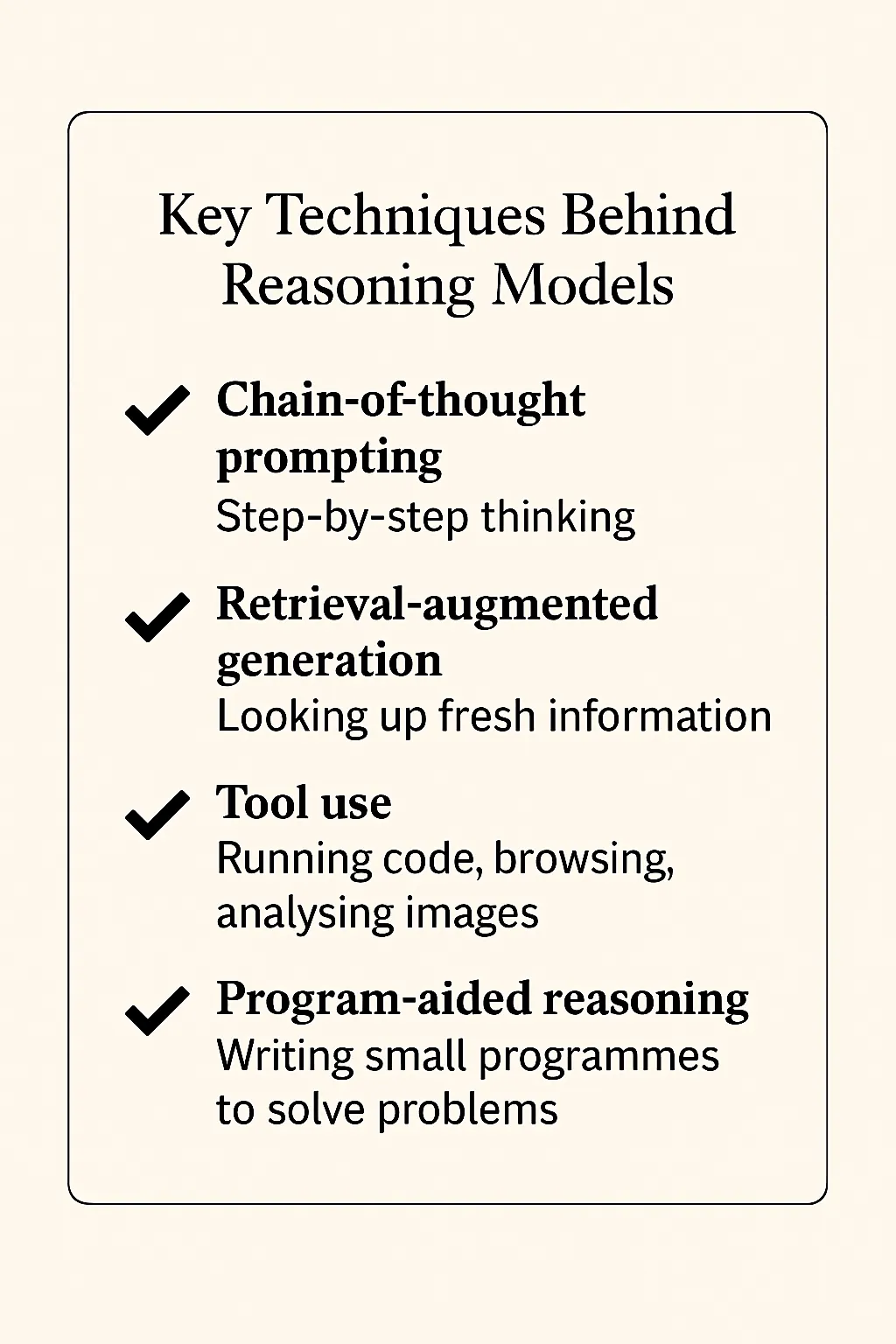

The techniques that make this possible

Modern reasoning systems often combine several techniques.

Chain-of-thought style decomposition

The model tackles a complex problem step by step rather than trying to answer in a single leap.

Retrieval-augmented generation

The system retrieves relevant material, such as documents, database records, or search results, and passes it to the model, so the answer does not rely only on what was in its training data.
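To make the retrieval step concrete, here is a minimal Python sketch. It assumes a tiny in-memory list of passages and ranks them by simple word overlap with the question; production RAG systems usually use embedding-based vector search instead. The names `retrieve`, `build_prompt`, and `docs` are illustrative and do not come from any particular library.

```python
import re
from collections import Counter

def tokenize(text: str) -> list[str]:
    """Crude lower-case word tokenizer (a stand-in for real tokenization)."""
    return re.findall(r"[a-z0-9]+", text.lower())

def retrieve(query: str, passages: list[str], k: int = 2) -> list[str]:
    """Return the k passages that share the most words with the query.

    Word overlap is an illustrative stand-in for embedding similarity.
    """
    query_terms = set(tokenize(query))

    def score(passage: str) -> int:
        counts = Counter(tokenize(passage))
        return sum(counts[term] for term in query_terms)

    # sorted() is stable, so passages with equal scores keep their input order
    return sorted(passages, key=score, reverse=True)[:k]

def build_prompt(query: str, passages: list[str], k: int = 2) -> str:
    """Place the retrieved passages in front of the question as grounding context."""
    sources = retrieve(query, passages, k)
    context = "\n".join(f"[{i}] {text}" for i, text in enumerate(sources, start=1))
    return f"Answer using only the sources below.\n\nSources:\n{context}\n\nQuestion: {query}"

# Hypothetical document store for illustration
docs = [
    "The 2024 travel policy caps hotel spend at 150 GBP per night.",
    "Office plants are watered on Fridays.",
    "Hotel bookings over the cap need manager approval.",
]
print(build_prompt("What is the hotel cap in the travel policy?", docs))
```

The resulting prompt would then be sent to the model; that call is omitted here. The irrelevant passage about office plants is filtered out before the model ever sees it, which is the point of the retrieval step.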

Tool use

The model can decide to browse the web, run code, inspect a file, or analyse an image when that would improve the answer.
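The loop below sketches one common way a tool-using system is wired together: the model returns either a tool call or a final answer, the surrounding code runs any requested tool, and the result is appended to the conversation before the model is asked again. It is a minimal Python sketch under stated assumptions: `scripted_model` is a hypothetical stand-in that follows a fixed script rather than a real model, and the two tools and their exchange rates are invented for illustration.

```python
from typing import Any, Callable

# Hypothetical tools. Real systems expose browsing, code execution, file access, etc.
# The exchange rates are invented for this example.
TOOLS: dict[str, Callable[..., Any]] = {
    "get_rate": lambda currency: {"USD": 1.25, "EUR": 1.15}[currency],
    "multiply": lambda a, b: a * b,
}

def scripted_model(history: list[dict[str, Any]]) -> dict[str, Any]:
    """Stand-in for a model: chooses the next action from the tool results seen so far.

    The amount (200 GBP) is hard-coded; a real model would read it from the question.
    """
    results = [m["content"] for m in history if m["role"] == "tool"]
    if len(results) == 0:
        return {"tool": "get_rate", "args": {"currency": "USD"}}
    if len(results) == 1:
        return {"tool": "multiply", "args": {"a": 200, "b": results[0]}}
    return {"answer": f"200 GBP is about {results[1]:.2f} USD"}

def run_agent(question: str, model: Callable = scripted_model, max_steps: int = 5) -> str:
    """Alternate between asking the model and executing the tool it requests."""
    history: list[dict[str, Any]] = [{"role": "user", "content": question}]
    for _ in range(max_steps):
        action = model(history)
        if "answer" in action:
            return action["answer"]
        output = TOOLS[action["tool"]](**action["args"])
        # Feed the tool result back so the next step can build on it
        history.append({"role": "tool", "name": action["tool"], "content": output})
    raise RuntimeError("No final answer within the step budget")

print(run_agent("How much is 200 GBP in USD?"))
```

The `max_steps` budget is a simple safeguard: it stops a model that keeps requesting tools from looping indefinitely.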

Program-aided reasoning

For some tasks, the model can write and execute small pieces of code to calculate, test, or verify results.
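A minimal Python sketch of the idea follows. The string `generated_code` stands in for a program a model might write (it is hypothetical, not output from any particular model); the harness executes it, reads back a variable named `result`, and uses the computed value to check a figure from a first, text-only pass. Note that `exec` here is not sandboxed; real systems run model-written code in an isolated environment.

```python
# Hypothetical program a model might write for:
# "How many weekdays are there from 3 March 2025 to 14 March 2025 inclusive?"
generated_code = """
from datetime import date, timedelta
start, end = date(2025, 3, 3), date(2025, 3, 14)
days = (end - start).days + 1
result = sum(1 for i in range(days) if (start + timedelta(days=i)).weekday() < 5)
"""

def run_generated(code: str) -> object:
    """Execute model-written code and return the value it stores in `result`.

    WARNING: exec() is not a sandbox; this is for illustration only.
    """
    namespace: dict[str, object] = {}
    exec(code, namespace)
    return namespace["result"]

claimed = 12  # hypothetical figure from a first, text-only answer
computed = run_generated(generated_code)
if computed != claimed:
    print(f"Revise: the text said {claimed}, the calculation gives {computed}")
```

The design choice is to trust the executed calculation over the fluent first draft: the mismatch is a signal to revise, which mirrors the "check and refine" behaviour described above.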

Taken together, these techniques make the model more adaptable and, on complex tasks, often more reliable.

What reasoning models are good at

Reasoning models are strongest where the work is multi-step and the quality of the process matters, not just the fluency of the output.

That includes:

- analysing long or complex documents

- comparing competing options against criteria

- planning a project or workflow

- debugging code

- conducting structured research

- synthesising evidence from multiple sources

- building scenario analyses and forecasts

The common pattern is that the model is not just retrieving information. It is combining information, checking it, and moving through the problem in a more disciplined way.

Why they matter for business use

For many organisations, the first wave of AI adoption focused on lightweight tasks:

- drafting emails

- summarising notes

- brainstorming ideas

- rewriting text

Those are still useful, but they only capture a small part of the opportunity.

Reasoning models push AI further into work that previously required more human judgement and structure. They are useful for:

HR and legal teams

Comparing policies, analysing regulations, and reviewing complex documents with more structured logic.

Analysts and economists

Running multi-step research, combining evidence, and testing scenarios with greater rigour.

Finance teams

Working through forecasting, reviewing assumptions, and interrogating outputs more systematically.

Product and operations teams

Planning work, evaluating trade-offs, and building clearer decision paths.

Software teams

Explaining errors, debugging step by step, and generating code that takes more of the task's requirements into account.

In all of these cases, the advantage is not just that the model can answer. It is that it can work through the path to the answer more carefully.

OpenAI's o3 showed where this is heading

OpenAI's o3 model, released in April 2025 as the successor to o1, was a high-profile example of this shift.

The important point was not simply that it was "smarter". It was that the model could:

- think for longer before responding

- decide when to browse, code, or inspect images

- combine tools inside a single task

- gather and reason over new information rather than relying only on memory

That matters because it starts to move AI from static response generation toward dynamic problem solving.

What reasoning models do not change

Reasoning models are not magic.

They can still:

- hallucinate

- use poor sources

- overcomplicate simple tasks

- arrive at polished but wrong conclusions

They are also not always the right tool. For simple drafting or straightforward transformation tasks, a standard model may be faster and more efficient.

The key is matching the model to the problem.

The real takeaway

Reasoning models matter because they make AI more useful for the kinds of work professionals actually care about: work with ambiguity, trade-offs, evidence, and multiple steps.

They are not just better autocomplete. They are a move towards systems that can plan, inspect, verify, and adapt as they work.

That does not remove the need for human judgement. It raises the value of it.

The organisations that benefit most will be the ones that learn where reasoning models genuinely improve outcomes, where they still need oversight, and how to design workflows that use them deliberately.